"Another thing I expect is audiences becoming a lot less receptive towards AI in general - any notion that AI behaves like a human, let alone thinks like one, has been thoroughly undermined by the hallucination-ridden LLMs powering this bubble, and thanks to said bubble’s wide-spread harms […] any notion of AI being value-neutral as a tech/concept has been equally undermined. [As such], I expect any positive depiction of AI is gonna face some backlash, at least for a good while."

Well, it appears I've fucking called it - I've recently stumbled across some particularly bizarre discourse on Tumblr, reportedly over a highly unsubtle allegory for transmisogynistic violence:

If you want my opinion on this small-scale debacle, I've got two thoughts:

First, any questions about the line between man and machine have likely been put to bed for a good while. Between AI art's uniquely AI-like sloppiness and chatbots' uniquely AI-like hallucinations, the LLM bubble has done plenty to separate the two, chiefly to AI's detriment. In particular, creativity has increasingly come to be viewed as an exclusively human trait, with machines capable only of copying what came before.

Second, using robots or AI to allegorise a marginalised group is off the table until at least the next AI spring. As I've already noted, the LLM bubble's undermined any notion that AI systems can act or think like us, and double-tapped any notion of AI being a value-neutral concept. Add in the heavy backlash that's built up against AI, and you've got a cultural zeitgeist that will readily other or villainise whatever robotic characters you put on screen - a zeitgeist that will ensure your AI-based allegory fails to land without some serious effort on your part.

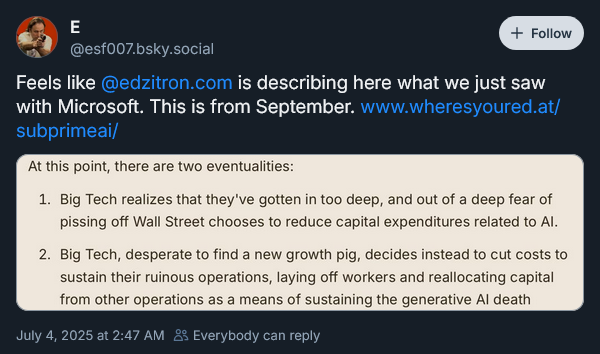

Zitron just dropped his latest premium column