this post was submitted on 12 Jun 2024

Worst one is probably Apple. They just announced "Apple Intelligence", which is just ChatGPT, whose largest shareholder is Microsoft. Figure that one out.

Well, most of the requests are handled on device with their own models. If it’s going to ChatGPT for something it will ask for permission and then use ChatGPT.

So the Apple Intelligence isn’t all ChatGPT. I think this deserves to be mentioned as a lot of the processing will be on device.

Also, I believe part of the deal is ChatGPT can save nothing and Apple are anonymising the requests too.

chatgpt won't save anything? Doubtful.

Brother I do not care about your doubts.

I want hard facts here.

Do you think that if you enter into a contract with a company like Apple they’ll just be like, "aww shit, they weren’t supposed to do that, anyway let’s carry on"?

No. This would open OpenAI up to potential lawsuits.

Even if they did save stuff, it gets anonymised by Apple before even being sent to ChatGPT servers.

The hard fact is OpenAI is already exposing itself to lawsuits by training on copyrighted material.

So the proof here should be "what makes them trustworthy this time?"

Because Apple’s lawyers will go ham.

I don’t want my comments here to be received as shilling Apple, more that I want them to be based on actual information that is provided and not opinion pieces.

The fact is, if they were caught saving data then Apple would just end the contract. Is it worth it for them to lose out on that cash for the sake of using that data, when they can just use all the other sources where they are allowed to do that?

Anyway, I don’t care what anonymised data they may or may not save. It won’t be tied to me.

Edit: Do you have some information on these existing lawsuits and the contracts they broke?

Google pays Apple $20 billion a year to keep their search on Apple devices. The subtext of "search" is Google pays Apple for your search data.

Apple has sold your data for the right price to Google, so there should be no expectation that they won't do the same with other companies.

They sell Google the right to be the default; they’re not selling data.

Again, point me to some proof of them actually selling data. To my understanding, Google pays to be the default engine.

That Google is the search engine means Google gets that valuable search data. So they pay to be the default search engine to get your data.

Sure, but let’s be honest. Even if it wasn’t, the majority of people would still be using Google anyway.

I prefer Arc Search myself.

There's kind of a difference between "we scraped the internet and decided to use copyrighted content anyways because we decided to interpret copyright law as not being applicable to the content we generate using copyrighted content" (omegalul) and "we explicitly agreed to a legally-binding contract with Apple stating we won't do that".

thing is apple doesnt give a shit about ur privacy

Finally, a reasonable comment.

I would concede that they want to keep it all for themselves, although a lot of anonymising of data is done.

My point is Apple are not sharing it with every third party on the Earth.

If you’re using Android then you don’t really have a leg to stand on, unless you’re using GrapheneOS and you’ve sandboxed Google services.

I would rather use a device that maybe keeps it all for themselves than one where it is shared with every man and his dog.

Plenty of things you can shit on Apple for, but this isn’t one of them I’m afraid.

careful, that's a hardcore tankie troll you replied to.

Doubt.

Voice recognition, image recognition, yes. But actual questions will go to Apple servers.

Is this conjecture, or can you provide some further reading, in the interest of not spreading misinformation?

Edit: I decided to read the info from Apple.

Say what you will about Apple, but privacy isn’t a concern for me. Perhaps, some independent experts will verify this in time.

Which is exactly what I said. It's not local.

That they are keeping the data you send private is irrelevant to the OP claim that the AI model answering questions is local.

OP here being me.

I feel I was pretty explicit in explaining how some requests will go to ChatGPT.

Apple has published papers on small LLM models and multimodal models already. I would be surprised if they aren't using them for on-device processing.

If you think that’s the WORST ONE, you have no idea about any of this

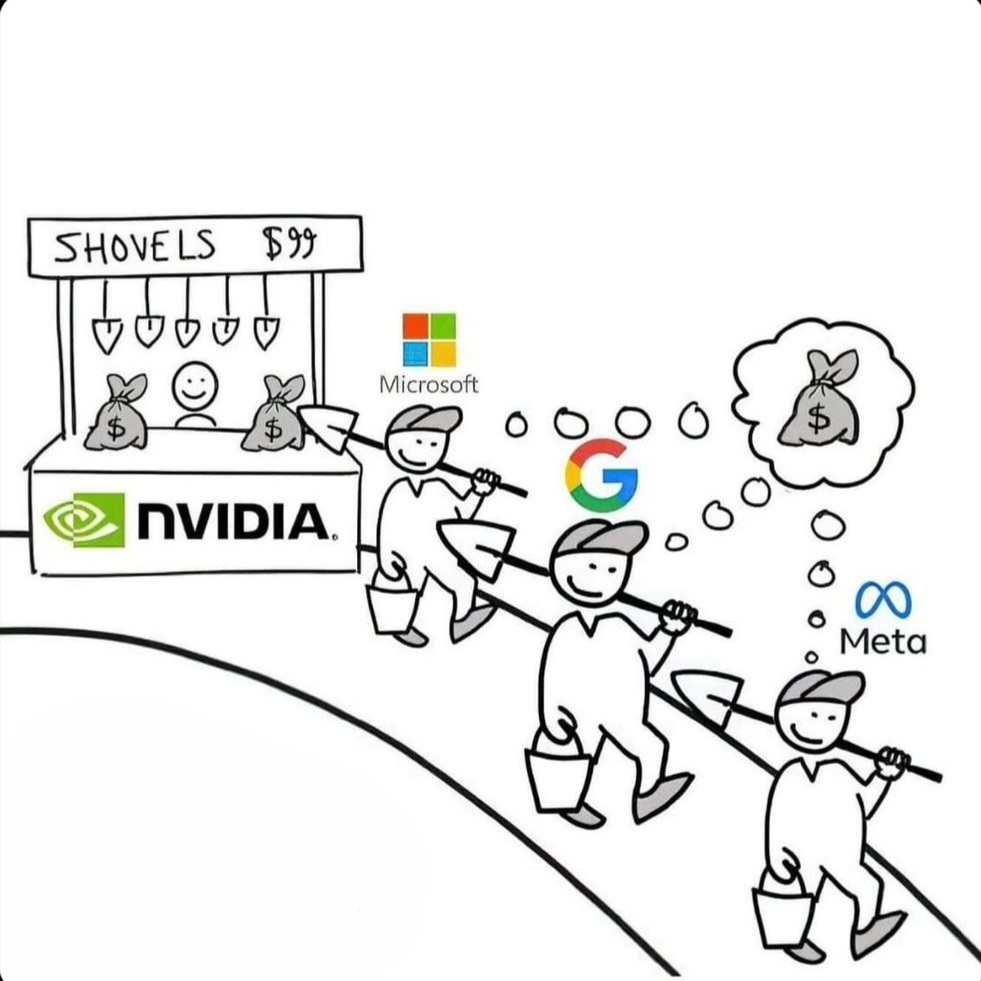

Yeah, if anything, Apple is behind the curve. Nvidia/AMD/Intel have gone full cocaine nose dive into AI already.

Not true. Most if not all requests are handled by Apple’s own models on device or on their own servers. When it does use OpenAI you need to give it permission each time it does.

That's just not true. Most requests are handled on-device. If the system decides a request should go to ChatGPT, the user is prompted to agree and no data is stored on OpenAI's servers. Plus, all of this is opt-in.

Literally impossible.

"Hey Siri, what's the weather forecast for tomorrow."

< The Farmer's Almanac that is in my local model says it will rain tomorrow. >

I think there’s a larger picture at play here that is being missed.

Getting the weather has been a standard feature for years now. Nothing AI about it.

What is “AI” is:

Hey Siri, what is the weather at my daughter’s recital coming up?

The AI processing, calculated on-device if what they claim is true, is:

Well {Your phone contact name}, it looks like it will {remote weather response} during your {calendar event from phone} with {daughter from contacts} on {event date}.

That is the idea behind on-device and cloud processing. The phone already has your contacts and calendar and does that work offline rather than educating an online server about your family, events and location. It requests the bare minimum from the internet, in this case nothing more than if you opened the weather app yourself and put in a zip code.
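To make that split concrete, here is a rough Python sketch of the idea. Everything in it is made up for illustration: the names `OWNER_NAME`, `CONTACTS`, `CALENDAR` and `fetch_forecast` are hypothetical stand-ins, not anything from Apple's actual implementation, and the "remote" call just returns a canned value.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CalendarEvent:
    title: str
    day: date
    zip_code: str


# Hypothetical stand-ins for data that already lives on the phone.
OWNER_NAME = "Alex"
CONTACTS = {"daughter": "Sam"}
CALENDAR = [CalendarEvent("recital", date(2024, 6, 20), "94016")]


def fetch_forecast(zip_code: str, day: date) -> str:
    """Stand-in for the only network call: it receives a zip code and a date,
    and nothing about who is asking or why."""
    return "rain"  # canned value; a real client would query a weather service


def answer_weather_at_event(relation: str, event_keyword: str) -> str:
    # Local step: resolve the person and the event from on-device data.
    person = CONTACTS[relation]
    event = next(e for e in CALENDAR if event_keyword in e.title)
    # Remote step: the bare-minimum request.
    forecast = fetch_forecast(event.zip_code, event.day)
    # Local step: compose the personalised sentence on the device.
    return (
        f"Well {OWNER_NAME}, it looks like it will {forecast} during your "
        f"{event.title} with {person} on {event.day.isoformat()}."
    )


print(answer_weather_at_event("daughter", "recital"))
```

The point of the sketch is only that the server-facing function never sees the contact name, the relationship, or the calendar entry.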

Voice processing is AI and was done by Apple servers. Previously, only the keyword "Hey Siri" was local. Onboard AI chips will allow this to be local. The actual queries will go to the servers. Phones do not have the power to run a useful LLM locally, at least not with the near-instantaneous response times phone users expect. A 56 Watt 128GB RAM M3 Max does around 8.5 tokens/second.

https://www.nonstopdev.com/llm-performance-on-m3-max/
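For a rough sense of what 8.5 tokens/second means for response time, here is a back-of-the-envelope calculation in Python. The 50-token reply length is an assumption picked for illustration, not a number from the article, and it ignores prompt processing time.

```python
def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit a reply of num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


REPLY_TOKENS = 50    # assumed length of a short spoken answer
M3_MAX_RATE = 8.5    # tokens/second, the figure quoted above

print(f"{seconds_to_generate(REPLY_TOKENS, M3_MAX_RATE):.1f} s")
```

Under those assumptions a short answer takes several seconds to finish generating, which is the gap being pointed at here.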

Perhaps this is why these features will only be available on iPhone 15 Pro/Max and newer? Gotta have those latest and greatest chips.

It will be fun to see how it all shakes out. If the AI can’t run most queries on the phone with all this advertising of local processing…there’ll be one hell of a lawsuit coming up.

EDIT: Finished looking for what I thought I remembered…

Additionally, Siri has been locally processed since iOS 15.

https://www.macrumors.com/how-to/use-on-device-siri-iphone-ipad/

I'm not guessing. I linked to the article about the M3, which is much more powerful than the A17 Pro in the 15 Pro and has the same NPU.

Forgive me, I’m no AI expert to fully compare the needed tokens per second measurement to relate to the average query Siri might handle, but I will say this:

Even in your article, only the largest model ran at around 8 tokens per second; the others ran much faster, and none of these were optimized for a task, just benchmarked.

Would it be impossible for Apple to be running an optimized model specific to expected mobile tasks, and leverage their own hardware more efficiently than we can, to meet their needs?

I imagine they cut out most worldly knowledge and use a lightweight model, which is why there is still a need to link to ChatGPT or Apple for some requests. Would this let them trim Siri down to perform well enough on phones for most requests? They also advertised launching AI on M1-2 chip devices, which are not M3-Max either…

Literally not what people are talking about. It's the "AI" part of the task that doesn't leave the device (unless it prompts to ask ChatGPT). Not that it can magically glean live info without making any request to the web...

Jeeze, fucking... get your shit straight, making me defend Apple... Fucking do better.

The "AI" parts are what they're saying happens on the device. This isn't a gotcha.