this post was submitted on 27 Jun 2024

Science Memes

Welcome to c/science_memes @ Mander.xyz!

After reading this, I have decided not to provide a formal proof for my other point: odds are that you wouldn't understand it, and I'm now reasonably confident that anyone who would understand it already accepts the fact the proof would have supported.

Ah, typo. 1/3 ≠ 0.333...

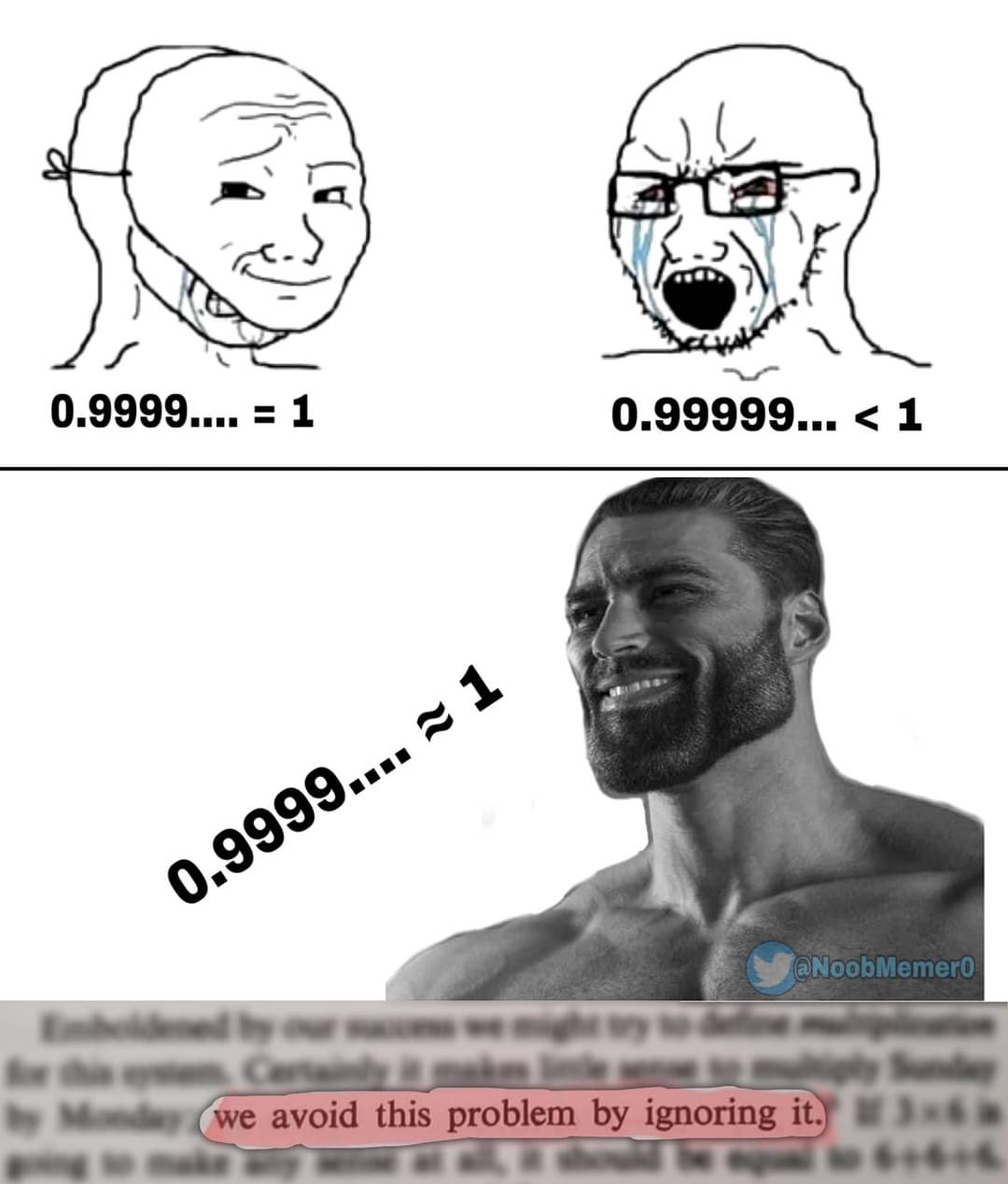

It is my opinion that repeating decimals cannot properly represent the values we use them for, and I would rather avoid them entirely (kinda like the meme).

Besides, I have never disagreed with the math, only with how poorly we go about correcting people. I have used some basic mathematical arguments to try to intimate that basic arithmetic is a limited system, but this has always been about solving the systemic problem of people getting caught out by 0.999... = 1. Math proofs won't add to this conversation, and I think they are part of the issue.

Is it possible to have a conversation about math without either fully agreeing or calling the other person stupid? Must every argument, even one about the topic itself, be backed up with proof (a sociological one in this case)? Or did you just want to feel superior?

Your opinion is incorrect as a question of definition.

You had in the previous paragraph.

Yes; however, the problem is that you are speaking on matters of which you are clearly ignorant. This isn't a question of different axioms, where we can show clearly how two models are incompatible but resolve that both are correct in their own contexts; this is a case where you are entirely, irredeemably wrong, and are simply refusing to correct yourself. I am an algebraist; understanding how two systems differ and compare is my specialty. We know that infinite decimals are capable of representing real numbers because we do so all the time. There. You're wrong and I've shown it via proof by demonstration. QED.
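For readers who want the convention spelled out, here is a short sketch (not part of the original comment) of the standard definition being appealed to: an infinite decimal denotes the limit of its finite truncations. Assuming that definition, both values discussed in this thread come out as a geometric series:

```latex
0.999\ldots \;=\; \sum_{n=1}^{\infty} \frac{9}{10^{n}}
\;=\; \lim_{N\to\infty}\left(1 - \frac{1}{10^{N}}\right) \;=\; 1,
\qquad
0.333\ldots \;=\; \sum_{n=1}^{\infty} \frac{3}{10^{n}}
\;=\; \lim_{N\to\infty}\frac{1}{3}\left(1 - \frac{1}{10^{N}}\right) \;=\; \frac{1}{3}.
```

Each finite truncation falls short of the target by exactly $10^{-N}$ (or a third of that), and the notation refers to the limit, not to any single truncation.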

They are just symbols we use to represent abstract concepts: in the same way that I can inscribe a "1" to represent 3-2={ {} }, I can inscribe ".9~" to do the same. The fact that our convention is occasionally confusing is irrelevant to the question; we could have a system whereby each number gets its own unique glyph when it's used, and it would still be a valid way to communicate the ideas. The level of weirdness you can get away with and still have a valid notational convention goes far beyond the meager oddities you've been hung up on here. Don't believe me? Look up lambda calculus.
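As a rough illustration of the lambda calculus remark (a sketch only, not something from the original comment), the Church encoding represents a natural number as "apply a function that many times". The names `zero`, `succ`, `add`, and `to_int` below are chosen for this example and are not any library's API:

```python
# Church numerals: the number n is encoded as "apply f to x, n times".
# All names here are illustrative assumptions, not a standard API.
zero = lambda f: lambda x: x
succ = lambda n: lambda f: lambda x: f(n(f)(x))
add = lambda m: lambda n: lambda f: lambda x: m(f)(n(f)(x))


def to_int(n):
    """Decode a Church numeral by counting applications of +1 to 0."""
    return n(lambda k: k + 1)(0)


one = succ(zero)
two = succ(one)
three = add(one)(two)

print(to_int(one), to_int(three))  # prints: 1 3
```

Nothing in `one` looks like the glyph "1", yet it behaves as that number under addition, which is all a notational convention needs to do.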